This challenge has the goal of identifying dermoscopic features, but provides the data in a way that we hope is significantly easier to parse and submit. Binary masks will be used to identify where features are present in lesions, and the goal will be to automatically generate these masks. Evaluation will be the same as the current Part 1.

Goal

Participants are challenged to submit automated segmentations of clinical dermoscopic features on supplied lesion images.

Data

Lesion dermoscopic feature data includes the original lesion image, paired with expert segmentations of the location of the "globules" and "streaks" dermoscopic features.

Training Data

The Training Data file is a ZIP file, containing 807 lesion images in JPEG format. All lesion images are named using the scheme ISIC_<image_id>.jpg, where <image_id> is a 7-digit unique identifier. EXIF tags in the lesion images have been removed; any remaining EXIF tags should not be relied upon to provide accurate metadata.

Training Ground Truth

The Training Ground Truth file is a ZIP file, containing 1614 binary mask images in PNG format. All masks are named using the scheme ISIC_<image_id>_<feature_name>.png, where <image_id> matches the corresponding Training Data image for the mask and <feature_name> is one of globules or streaks. All mask images will have the exact same dimensions as their corresponding lesion image. Mask images are encoded as single-channel (grayscale) 8-bit PNGs (to provide lossless compression), where each pixel is either:

0: representing the absence of a given dermoscopic feature

255: representing the presence of a given dermoscopic feature
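The naming and encoding conventions above can be checked mechanically before any training or submission. The following is a minimal sketch, assuming only the schemes stated in this section; the function names are hypothetical and not part of any official challenge tooling.

```python
import re

# Patterns derived from the naming schemes described above:
# ISIC_<image_id>.jpg and ISIC_<image_id>_<feature_name>.png,
# where <image_id> is a 7-digit identifier.
IMAGE_RE = re.compile(r"^ISIC_(\d{7})\.jpg$")
MASK_RE = re.compile(r"^ISIC_(\d{7})_(globules|streaks)\.png$")


def parse_mask_name(filename):
    """Return (image_id, feature_name), or None if the name does not match."""
    match = MASK_RE.match(filename)
    if match is None:
        return None
    return match.group(1), match.group(2)


def image_name_for_mask(filename):
    """Return the lesion image name that a mask file corresponds to."""
    parsed = parse_mask_name(filename)
    if parsed is None:
        return None
    return f"ISIC_{parsed[0]}.jpg"


def has_valid_pixel_values(pixels):
    """Check that every pixel value is 0 (absent) or 255 (present).

    `pixels` is any iterable of integers, e.g. the flattened mask.
    """
    return all(value in (0, 255) for value in pixels)
```

For example, `parse_mask_name("ISIC_0000000_globules.png")` yields the image identifier and the feature name, while a file with any other feature name is rejected.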

Notes

Feature data were obtained from expert superpixel-level annotations, with cross-validation from multiple evaluators.

The dermoscopic features of "globules" and "streaks" are not mutually exclusive (i.e. both may be present within the same spatial region or superpixel). Additionally, a dermoscopic feature must only be present anywhere within a superpixel region for the superpixel to be considered positive for that feature; it is not required that the dermoscopic feature fill the entire superpixel region.
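The "present anywhere within a superpixel" rule can be illustrated with a short sketch. It assumes a hypothetical superpixel label map (one integer label per pixel) and a pixel-level presence map; neither format is specified by the challenge, and both are represented here as plain nested lists for illustration only.

```python
def superpixel_mask(labels, feature_present):
    """Expand pixel-level feature presence to whole superpixels.

    labels: 2D list of superpixel labels (hypothetical format).
    feature_present: 2D list of booleans with the same shape.
    Returns a 2D list of 0/255 values, where every pixel of a superpixel
    is 255 if the feature occurs anywhere inside that superpixel.
    """
    # Collect the labels of all superpixels touched by the feature.
    positive = {
        label
        for label_row, feature_row in zip(labels, feature_present)
        for label, present in zip(label_row, feature_row)
        if present
    }
    # Mark every pixel of a positive superpixel as 255.
    return [[255 if label in positive else 0 for label in row] for row in labels]


# A 2x3 image with two superpixels (0 and 1); the feature touches
# a single pixel that belongs to superpixel 0.
labels = [[0, 0, 1],
          [0, 1, 1]]
feature_present = [[False, False, False],
                   [True, False, False]]
mask = superpixel_mask(labels, feature_present)
```

Because the two features are not mutually exclusive, the same function would be applied once per feature, and the resulting masks may overlap.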

Relevant information to automatically determine the presence of a dermoscopic feature at a given location may include contextual information of the surrounding region.

Participants are not strictly required to utilize the training data in the development of their dermoscopic feature segmentation algorithm and are free to train their algorithm using external data sources.

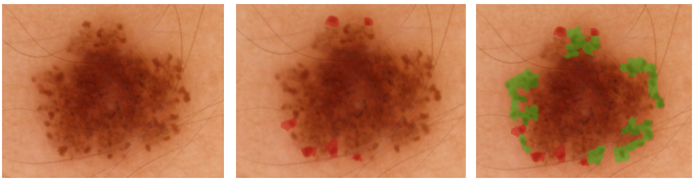

Dermoscopic Feature Tutorial

The following tutorial is designed to assist participants in understanding the underlying semantics of the "globules" and "streaks" dermoscopic features:

Submission Format

Test Data

Given the Test Data file, a ZIP file of 335 lesion images in the exact same format as the Training Data, participants are expected to generate and submit a file of Test Results.

The Test Data file should be downloaded via the "Download test dataset" button below, which becomes available once a participant is signed in and opts to participate in this phase of the challenge.

Test Results

The submitted Test Results file should be in the exact same format as the Training Ground Truth file. Specifically, the Test Results file should be a ZIP file of 670 binary mask images in PNG format. Each mask should contain the participant's best attempt at a fully automated dermoscopic feature segmentation of one of the "globules" or "streaks" features in the corresponding image in the Test Data. Each mask should be named and encoded according to the conventions of the Training Ground Truth.
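Since 335 test images with two features each give the expected 670 masks, a submission archive can be sanity-checked before upload. The sketch below is one possible check, not the challenge's own parser; the function names are hypothetical, and comparing base names only (ignoring any directory inside the ZIP) is an assumption about what the parser accepts.

```python
import io
import zipfile

FEATURES = ("globules", "streaks")


def expected_mask_names(image_ids):
    """One mask name per (image, feature) pair, per the naming scheme."""
    return {f"ISIC_{image_id}_{feature}.png"
            for image_id in image_ids
            for feature in FEATURES}


def check_submission(zip_bytes, image_ids):
    """Return (missing, unexpected) sets of mask file names.

    zip_bytes: the raw bytes of the Test Results ZIP file.
    image_ids: the 7-digit identifiers of the Test Data images.
    """
    with zipfile.ZipFile(io.BytesIO(zip_bytes)) as archive:
        # Assumption: only base names matter; directory entries are skipped.
        names = {name.rsplit("/", 1)[-1]
                 for name in archive.namelist()
                 if not name.endswith("/")}
    expected = expected_mask_names(image_ids)
    return expected - names, names - expected
```

A submission is complete under this check when both returned sets are empty. It does not verify image dimensions or pixel encoding, which would need an image library.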

Submission Process

Shortly after submitting, participants will receive an email at their registered email address, either confirming that their submission was parsed and scored or notifying them that parsing of their submission failed (with a link to details as to the cause of the failure). Participants should not consider their submission complete until receiving a confirmation email.

Multiple submissions may be made with absolutely no penalty. Only the most recent submission will be used to determine a participant's final score. Indeed, participants are encouraged to provide trial submissions early to ensure that the format of their submission is parsed and evaluated successfully, even if final results are not yet ready for submission.

Evaluation

Submitted Test Results feature segmentations will be compared to the Test Ground Truth, which will remain private until after the challenge ends. The Test Ground Truth was produced from the exact same source and methodology as the Training Ground Truth (both sets were randomly sub-sampled from a larger data pool).

Submitted segmentations will be compared using a variety of metrics, all computed at the level of single pixels, including:

However, participants will be ranked and awards granted based only on the Jaccard index.
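For reference, the pixel-level Jaccard index of a predicted mask against a ground truth mask is the number of pixels positive in both, divided by the number of pixels positive in either. The sketch below is a plain-Python illustration under that standard definition; it is not the challenge's scoring code, and the handling of two entirely empty masks (returning 1.0) is an assumption, since the source does not say how that case is scored.

```python
def jaccard_index(predicted, truth):
    """Pixel-level Jaccard index of two same-sized 0/255 masks.

    predicted, truth: 2D lists of pixel values (0 or 255).
    """
    intersection = 0
    union = 0
    for predicted_row, truth_row in zip(predicted, truth):
        for p, t in zip(predicted_row, truth_row):
            p_on = p == 255
            t_on = t == 255
            intersection += p_on and t_on  # positive in both masks
            union += p_on or t_on          # positive in at least one mask
    # Assumption: two empty masks are treated as a perfect match.
    return intersection / union if union else 1.0


predicted = [[255, 0],
             [255, 255]]
truth = [[255, 255],
         [0, 255]]
score = jaccard_index(predicted, truth)  # 2 shared pixels / 4 in the union
```

How scores are aggregated across images and across the two features is not stated here, so any averaging on top of this per-mask value would be a further assumption.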

Some useful resources for metrics computation include: